Powerful tool to quickly create items for publications in Wikidata

This is a great example of why I love the WikiCite community. At WikiCite 2017 a group of people decided to write a zotero translator for the Wikidata community.

This is a great example of why I love the WikiCite community. At WikiCite 2017 a group of people decided to write a zotero translator for the Wikidata community.

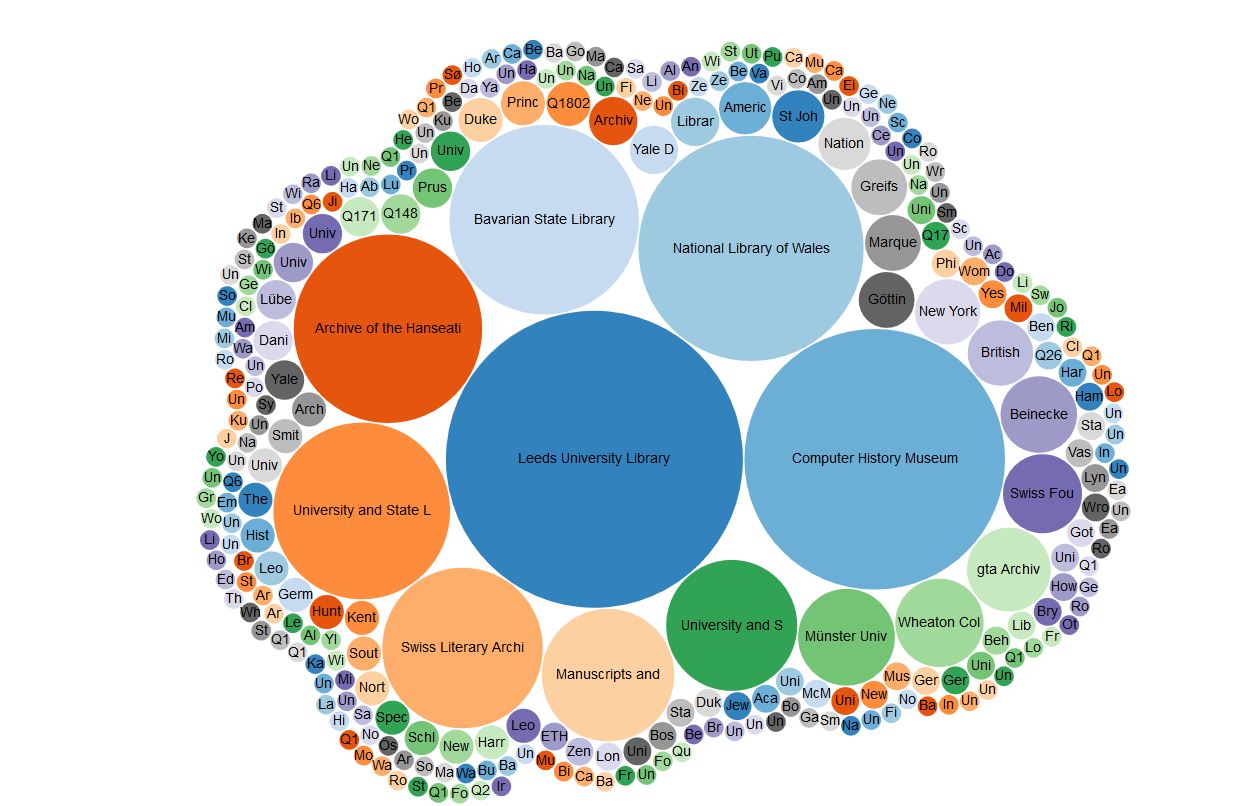

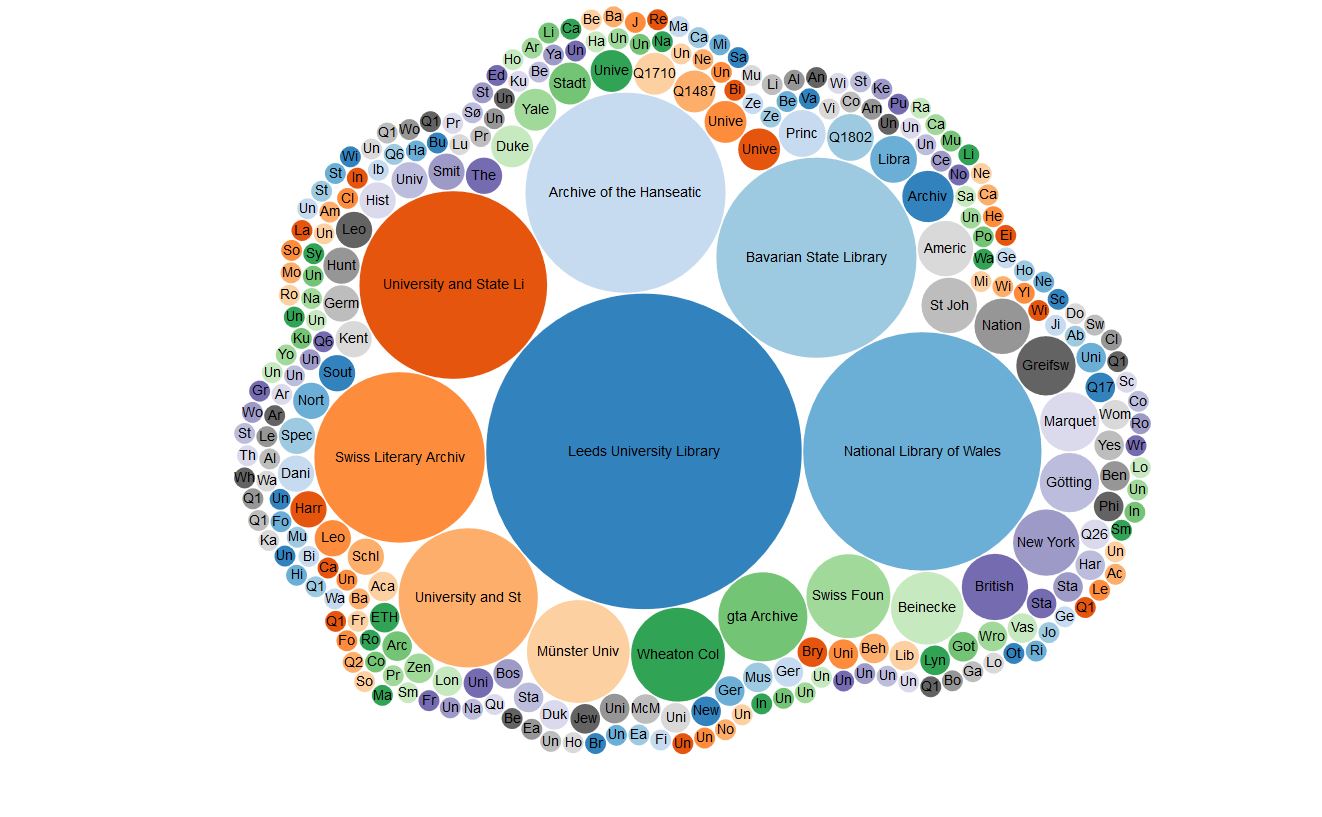

Last week I had the opportunity to learn about a data archive at the Institution for Social and Policy Studies at Yale University. The archive is well-curated, and has a lot of metadata about data files that they house, such as supporting data sets or replication materials related to published papers and books that ISPS-affiliated scholars have created.

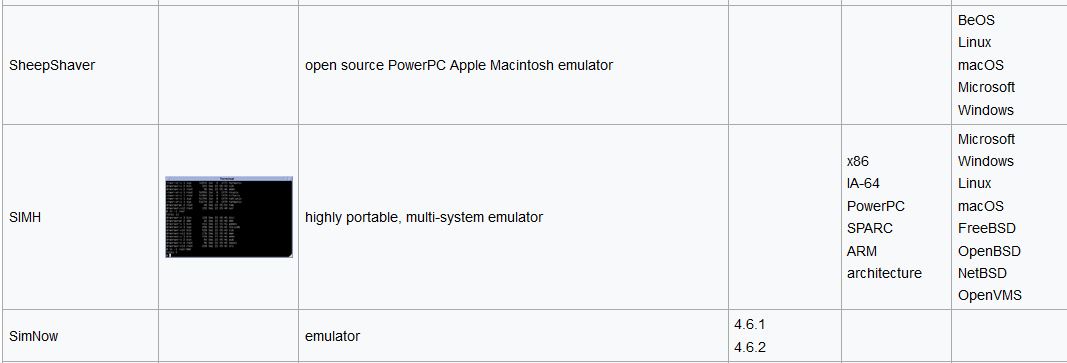

This week I read about the zotero translator and I wanted to try it out. Thank you very much, zotkat! This translator meets the needs of people who want a semi-automated way to quickly create items of publications of many varieties.

If you run this query on the Wikidata Query Service then you will be able to explore the items for publications and explore the supporting data files by following the links to where the data is stored at ISPS.