Like Mary mentioned, we’re in! Migration was a five-week process that involved a lot of on-the-fly problem solving and the chance to really engage with how ArchivesSpace works. It also required setting alarms to check on scripts at 2:00 am. Thank goodness we’re done.

We work in a large, complex organization with a lot of local requirements. We also monkeyed around with AT quite a bit, and are moving into an ArchivesSpace environment with a lot of plug-ins. For most folks, it will be a lot easier than this. Here’s my documentation of what we’ve done and what I would think about doing differently if I were to do this again.

Reflections on open-source software

As often happens, the folks at the Bentley said it already and best: open-source projects let us move together as a community that cares about manifesting professional theory and principles in software. We all need software in order to support our work. We’re all used to putting resources toward tools that support our work, no matter our budgets. I hope that we can find a way to think of ourselves as members of this process of moving forward and see ourselves less as customers, or powerless consumers of this technology.

In order for this to work, we’re going to need really good leadership and coordination from our friends at Lyrasis. Wouldn’t it be great to see a three-year plan of what the software should be able to do, which individual institutions could then put resources toward realizing? I could imagine a process, too, where if an institution has an idea for a feature and plans to fund development, they could bring this idea to an ArchivesSpace governance group and know, right off the bat, whether this can be done as a part of the core code or should be maintained as a plug-in.

Our migration process was so complicated because we had to accommodate a number of plug-ins and data changes. It was also really fun — we had the opportunity to engage with our community about how our work should be supported, how our users should consume our data, and how we can do this in a way that is elegant and in alignment with professional standards. Also, we were lucky to work with extremely competent and intelligent developers, which made the whole process all the more fun.

What it may be like for you when you migrate

If you’ve never gone rogue in AT or Archon, you can expect a lot fewer steps. I would still recommend the following:

- Get comfortable with the data. Do some practice migrations. Sit down with someone who knows how to query databases (this someone may be yourself — it really ain’t that hard and google can talk you through, or use this cheatsheet I made for my colleague) and see how your data is going to be stored in ArchivesSpace vs. Archivists’ Toolkit (or Archon, or your EADs and accessions spreadsheets).

- Document anything that seems like a mistake in your migration and write in to the ArchivesSpace listserv to report systemic problems. I’m very impressed with the architecture behind ArchivesSpace, but I think that the migrator was under-tested. This is FOSS and can still be improved, but we need engagement from users in order to improve it.

- Create an organizational situation where this isn’t just one person’s problem. Make sure that everyone in your repository knows what’s coming, get them into the guts of it, and get some help with any clean-up projects you may want to do.

- Iterate!!!! Be proud that you have data in structured form instead of sitting in paper finding aids. It’s okay that not everything’s perfect right now — figure out what YOU need in order to meet a baseline of collection control and user access. Target those areas. Don’t fuss over style.

- Communicate about when the migration will happen. Give yourself a week with NO data updates in AT or ASpace so that you can do the migration, check that it went well, and do some testing. By this point you should have done enough test migrations that there will be no surprises, but you definitely don’t want to feel rushed.

- Set up a service-level agreement between yourself and IT. How often will back-ups happen? How quickly will they respond to requests? What will they do if it seems like ArchivesSpace needs more server resources?

- Have a plan for training users in ArchivesSpace. Encourage them to play in the sandbox before migration to get used to the look and feel of using the application.

- Have a plan for what you want to clean up in AT before migration (this may be nothing)

- Make a copy of your AT database

- Run the migration!

- Do more database queries to make sure that the data migrated the way that you want it to.

- Tell your colleagues to get excited, because they’re now in ArchivesSpace!

What it looked like when we migrated

The mechanics of the migration

We migrated four Archivists’ Toolkit databases to one ArchivesSpace database. This is not, technically, a recommended pathway for the AT migrator tool. When we started, this was actually not even possible. The migrator would throw record save errors when a resource was associated with a subject or name already in the database, resulting in a huge percentage of our corpus never migrating. Furthermore, we were having a very hard time getting migrations to finish — we were having Java heap space errors, despite working on machines with a ton of memory and setting the heap space variable in AT to as high as seemed prudent. It turned out that the migrator itself had a memory leak.

We contracted with Hudson Molonglo to fix the migrator, which squashed both of those bugs. My understanding is that these changes will be implemented into the core ASpace project soon.

Since we don’t have permission to access the servers at Yale on which ArchivesSpace would be hosted, we decided to do all migrations locally. The idea here was that we would have complete access to and control over all configuration settings and that we wouldn’t have to worry about network problems over the course of very long migration scripts.

Pulling down a copy of the database

The AT database is a record of our work, which will be accessioned into each of our respective repositories. For this reason, we wanted to make any pre-migration changes to a copy of the database, not the database itself, and do migration from this copy.

We exported these databases using the mysqldump program. You can also make a copy with SQL interface tools like MySQL Workbench or (Mark’s favorite) HeidiSQL. Before working on this, I had never installed MySQL. It’s actually not too hard, and either google or your local sysadmin could walk you through it in about an hour.

Something that took me WAY TOO LONG to figure out that may save someone else some hassle — if you try to load your exported database to a new schema and you get an error like “Lost connection to MySQL database”, you might think that there’s something wrong with your connection to your database or how you set up MySQL (especially if it’s over a network). I eventually figured out that there was actually a field in the digital objects table that was larger than the max packet size, and I needed to change that variable. Here’s how to do that:

- log in to mysql: mysql -u root -p

- set global max_allowed_packet=268435456; (make it as big as you want)

- log out

- log in

- show variables like 'max_allowed_packet'; (make sure this matches what you had set)
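For what it's worth, the 268435456 in those steps is just 256 MiB written out in bytes. Here is a tiny sketch for working out the number for some other size and printing the matching statement. The function names are made up for illustration and are not part of MySQL.

```python
def packet_bytes(mebibytes: int) -> int:
    """Convert a size in MiB to the byte count max_allowed_packet expects."""
    return mebibytes * 1024 * 1024


def set_packet_statement(mebibytes: int) -> str:
    """Build the 'set global' statement for a given size in MiB."""
    return f"set global max_allowed_packet={packet_bytes(mebibytes)};"


if __name__ == "__main__":
    # Prints the statement to paste into the mysql prompt.
    print(set_packet_statement(256))
```

You would still paste the printed statement into the mysql prompt yourself; the sketch only does the arithmetic.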

I also got into the habit of making a back-up after each step, so that if I fouled up the next one I wouldn’t have to start from the beginning and wait for long scripts to run.

AT data enhancement before migration

Before migration, we ran SQL scripts on copies of our AT databases to clean up some data issues and make some changes that we had been keen to make for a while.

- ns2 prefixes needed to be changed to xlink

- all instance types should be “mixed materials” (because it’s frankly bonkers to give a container a type — that’s not how you describe carriers!)

- we trimmed whitespace from container indicators and barcodes so that ArchivesSpace could match on common containers.

- We’ve been wanting to replace Roman numerals in our series identifiers with Arabic numerals for a while now, and this seemed like a good chance to do it.
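We did this work in SQL, but the logic of the last two items is easy to show in a few lines of Python. This is a rough sketch, not the scripts we ran. It assumes series identifiers look like "Series IV", which is a guess at the shape of the data, and the function names are invented. Watch out for identifiers that use letters like C, D or M as plain letters rather than numerals, since a naive conversion would turn "Series C" into "Series 100".

```python
import re

# Values of the individual Roman numeral symbols.
ROMAN_VALUES = {"I": 1, "V": 5, "X": 10, "L": 50, "C": 100, "D": 500, "M": 1000}


def roman_to_arabic(numeral: str) -> int:
    """Convert a well-formed Roman numeral such as 'XIV' to an integer."""
    total = 0
    largest_seen = 0
    # Walk right to left: a smaller symbol to the left of a larger one
    # is subtracted, anything else is added.
    for symbol in reversed(numeral.upper()):
        value = ROMAN_VALUES[symbol]
        if value < largest_seen:
            total -= value
        else:
            total += value
            largest_seen = value
    return total


def clean_series_identifier(identifier: str) -> str:
    """Rewrite 'Series IV' as 'Series 4'; leave anything else alone."""
    match = re.fullmatch(r"\s*(Series)\s+([IVXLCDM]+)\s*", identifier, re.IGNORECASE)
    if match is None:
        return identifier.strip()
    return f"{match.group(1)} {roman_to_arabic(match.group(2))}"


def clean_indicator(indicator):
    """Trim stray whitespace from a container indicator or barcode."""
    return indicator.strip() if indicator is not None else None
```

With this, clean_indicator(" 10 ") and clean_indicator("10") come out the same, which is the point of the trimming step: containers can only be matched on values that are actually identical.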

Containers before migration — fauxcodes

Frankly, I’m not super-proud of this because it feels kind of hacky…

As documented elsewhere, we’re moving into a version of ArchivesSpace with a plug-in to help us keep track of circulating containers. A requirement for this plug-in was to deal with all incoming data, in whatever form it comes (migration from AT or Archon, folks already in ASpace, using the rapid data entry tool, importing EAD — all of it) and turn containers into modeled containers.

The container management plug-in assumes that within a resource record, any container with the same barcode refers to the same thing. If there is no barcode, it assumes that any container called “Box 10” (for instance) refers to the same thing. In most environments, this is absolutely true. However, at Manuscripts and Archives, we have a long tradition of re-numbering boxes at each series. Here, box 10 in series one is different from box 10 in series three, and we wanted to make sure that the database models it this way.

We have barcodes for 122,263 containers in our repository, so for the most part, we have no reason to sweat this. But we still have 11,873 distinct containers that don’t have barcodes, and it would be bad to model the two distinct box 10s as a single box 10.

So, what I really needed to do was either barcode more than 10 thousand containers (oh hell no) or give them a barcode-like designator that made differences clear.

Hence, fauxcodes. A fauxcode concatenates the call number, the series indicator, and the container number. It accounts for the weird shit that happens when you try to concatenate something stored as a null vs. something stored as a blank (I learned about this the hard way). It distinguishes my box 10s.

I also had to deal with boxes where the folder number was shoved into the box number field, to make sure that ASpace didn’t consider that a distinct box…

We ran fauxcodes immediately before migration. We also deleted fauxcodes immediately after migration, because while they’re doing the work of barcodes, they’re not actually barcodes and someone may want to give those containers real barcodes someday.
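To make the fauxcode idea concrete, here is a minimal sketch in Python. It is not the SQL we ran, and the "fauxcode_" prefix, the underscore separator and the "none" placeholder are all invented for illustration. The null-versus-blank problem is the kind of thing you hit in MySQL, where CONCAT returns NULL as soon as any one argument is NULL, so the sketch treats a missing value and a blank value the same way before joining.

```python
def fauxcode(call_number, series, box):
    """Build a barcode-like designator from call number, series and box.

    None (a database NULL) and '' (a blank) are treated identically, so a
    missing series cannot wipe out or reshape the whole value.
    """
    parts = [call_number, series, box]
    # Normalize NULLs, blanks and whitespace-only values to one placeholder.
    cleaned = [(part or "").strip() or "none" for part in parts]
    return "fauxcode_" + "_".join(cleaned)


if __name__ == "__main__":
    # Box 10 in series 1 and box 10 in series 3 get different designators.
    print(fauxcode("MS 1", "1", "10"))
    print(fauxcode("MS 1", "3", "10"))
```

Because the series is part of the string, the two box 10s no longer look like the same container.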

Deleting rogue data

This may have been the result of being early adopters of Archivists’ Toolkit, or someone may have just done some ill-considered SQL updates back in the day, but we had quite a bit of rogue and orphan data in AT that we had to deal with. Some of it we left and it simply didn’t migrate — other data caused migration errors and had to be dealt with. Doing these kinds of deletes is pretty scary (especially considering that AT isn’t a very well-documented database), so my best advice is to always avoid non-canonical data updates in your production system so that you don’t have to deal with these kinds of problems later.

Make changes to the accessions table

Alright, another word of wisdom (and this is just my opinion — others may disagree). If you’re in a database, use fields for what they’re intended for. Figure out if you REALLY need those user-defined fields, and if you do, and there’s a compelling archival practice argument behind it, maybe start with a change request to the core software. Don’t ever re-name existing fields. You will have to migrate someday and the data in the new fields won’t make any damn sense.

We found ourselves in a situation where two of the repositories on campus (Manuscripts & Archives and Beinecke) put ENTIRELY different data into the same database fields in Archivists’ Toolkit. We contracted with Hudson Molonglo to fix the bulk of this with a post-migration script, but there were a couple of bits and bobs that we needed to fix with SQL beforehand. In a series of painful updates, this one wasn’t sooo bad, but it was actually a huge pain in the neck to just figure out what the differences were between the different AT databases. Hat tip to Mike Rush for doing most of that brain work.

Cleaning up extent lists

Extent values are the hall closets of archival description — all sorts of potentially-useful but super-ugly crap ends up there. We did a little bit of merging before migration, but there’s going to be a lot more in ASpace.

We also took a moment to decide what the value of a controlled value list is, and I think that we came to a really smart conclusion. Controlled values are really good for reporting. Hence, let’s only put stuff in there we want to report on. We decided that the controlled value lists should include space occupied (or storage occupied, in the case of electronic records), A/V carrier types, and a few categories of records used for accessioning purposes. That’s it.

But since SOMETHING is required to be put in the drop-down extent value list, we had to do a slightly dumb hack — “See container summary” is now a value in that field. I’d sort of like for ArchivesSpace to revisit how that subrecord is modeled, but not today.

We didn’t clean up everything, not by a long shot. The ArchivesSpace extent list hall closet is still full, and cleaning it out is going to be pretty tricky. We’ll be sure to post techniques for clean-up as we develop them.

Cleaning up levels

There was definitely a problem with the AT EAD importer, setting level=’otherlevel’ and otherlevel=’¯\_(ツ)_/¯’. At Manuscripts and Archives, we decided that in this case, we could happily set otherlevel=null and level=’file’. Mark took a closer look at the BRBL database, and I made changes in the Collections Collaborative database by hand. There were also data entry errors and errors of description. We cleaned up all of this before migration.
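Here is roughly what that kind of update looks like, sketched against an in-memory SQLite database so that it runs anywhere. The table name, the column names and the list of meaningless values are all made up for this example. They are not the AT schema, and this is not the script we ran.

```python
import sqlite3

# In-memory stand-in for a copy of the database. The table and column
# names are invented for illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE components (id INTEGER PRIMARY KEY, level TEXT, other_level TEXT)"
)
conn.executemany(
    "INSERT INTO components (level, other_level) VALUES (?, ?)",
    [("otherlevel", "unknown"), ("series", None), ("otherlevel", "Heading")],
)

# Values of other_level that say nothing useful (an assumed list).
MEANINGLESS = ("unknown", "")


def reset_meaningless_levels(connection):
    """Set level='file' and clear other_level where it carries no meaning."""
    placeholders = ",".join("?" for _ in MEANINGLESS)
    cursor = connection.execute(
        "UPDATE components SET level = 'file', other_level = NULL "
        f"WHERE level = 'otherlevel' AND other_level IN ({placeholders})",
        MEANINGLESS,
    )
    connection.commit()
    return cursor.rowcount


changed = reset_meaningless_levels(conn)
rows = conn.execute(
    "SELECT level, other_level FROM components ORDER BY id"
).fetchall()
```

Rows where otherlevel holds something meaningful (the "Heading" row here) are left alone, which is why the cases that needed judgment were still checked by hand.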

Cleaning up container profiles

Again, this is a Manuscripts & Archives-specific thing, but since we had all of this information about box types, we needed a strategy for making container profiles. This work is on GitHub. We had an actual human worker (Thanks, Christy!) measure each container type to get container profile dimensions. They’re now in ArchivesSpace.

Setting up ASpace

For the first migration, we had to make sure that the application and plug-ins were ready to go. The documentation on GitHub is pretty good. Don’t forget to run the database set-up script!

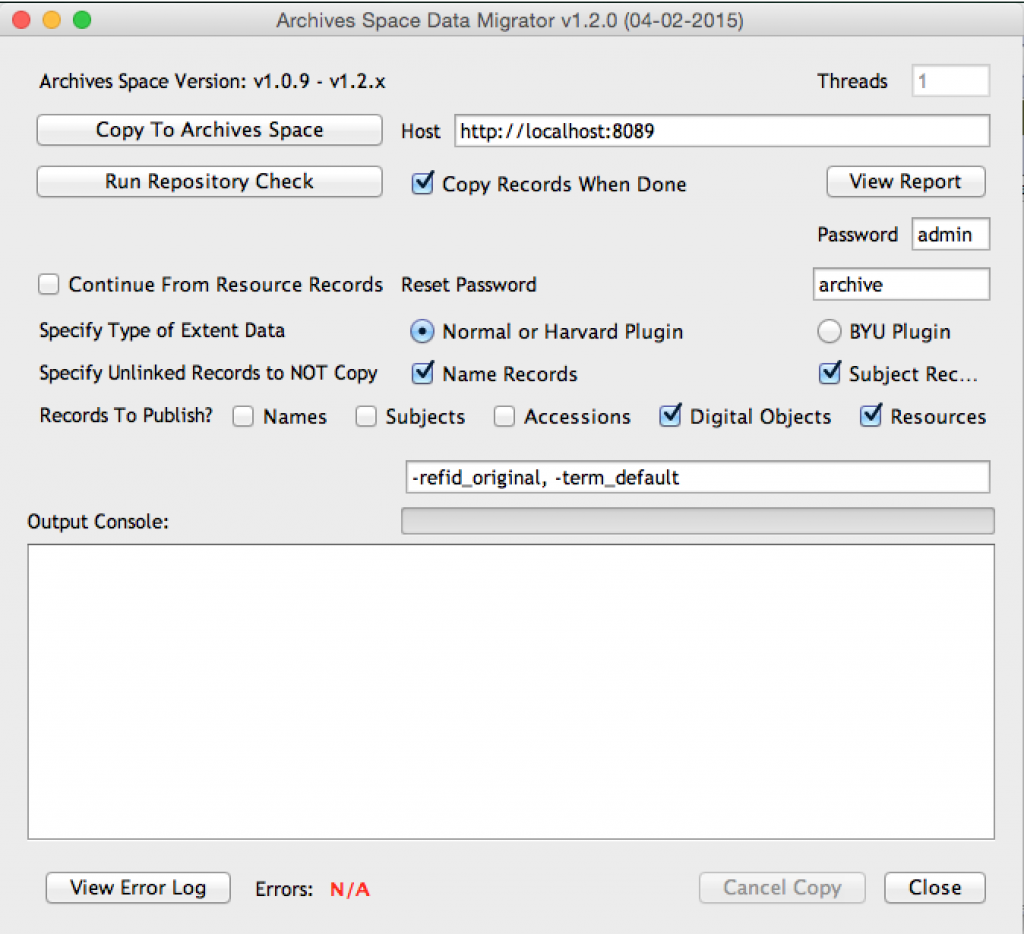

Clicking the button

The actual migration happens as a plug-in to Archivists’ Toolkit.

At some point, you just have to click the button and let it go…

Data integrity checking

The migrator tool will tell you what percentage of each record type migrated, but we definitely found it helpful to go deeper and check archival objects, notes, instances, and the logic of the migration itself. We did a really deep dive into 0.1% of each record type, and I’m glad we did: we uncovered a number of migration logic errors, which I hope to see fixed in the migrator. We ended up doing some SQL updates straight from AT to ASpace to ameliorate these, too.

- RightsTransferred note. I wrote a couple of fussy emails to the listserv about what’s wrong with this, which you’re welcome to read. We basically decided to move this data straight from the AT database into the use restrictions field in the Accessions table, since we didn’t have enough structured data to make an accurate rights record. I’m also pretty leery of the data model for rights records in ASpace and how we might want to use them, but that’s another blog post.

- Other blank values set to default. This is something that Kate Bowers and the folks at Harvard very helpfully pointed out. Here, we did some queries to see where ASpace had invented values so that we could set them back to blank or a neutral value.

We were very glad to have done migration on localhost to make these kinds of updates just a bit easier.
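If you want to do a similar spot check, picking the 0.1% is easy to script. The sketch below is one way to do it, not a record of how we did it. The record types and id ranges are invented, and the seed is only there so that a colleague can reproduce the same sample.

```python
import math
import random


def sample_for_review(record_ids, fraction=0.001, seed=None):
    """Pick a random sample of record ids to check by hand.

    Returns at least one id whenever there are any records, so that small
    record types are not skipped entirely.
    """
    ids = list(record_ids)
    if not ids:
        return []
    size = max(1, math.ceil(len(ids) * fraction))
    rng = random.Random(seed)
    return sorted(rng.sample(ids, size))


if __name__ == "__main__":
    # Hypothetical record types and id ranges, for illustration only.
    record_types = {"accession": range(1, 5001), "resource": range(1, 801)}
    for name, ids in record_types.items():
        print(name, sample_for_review(ids, seed=2015))
```

For each sampled id, you would then pull up the record in both AT and ASpace and compare them field by field.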

Now, delete users

User records migrated over from AT. Since we’re moving to LDAP authentication and log-in, we deleted them from the database to help avoid duplicate usernames and unsecured log-in.

Delete fauxcodes

Fauxcodes only went in so that ArchivesSpace would know which components could be linked to the same top containers. Their work is done now. Let’s all forget about fauxcodes.

Pulling in data from MSSA’s extended instances table

Now we come to the reason why we did the container management work in the first place — my repository had gone rogue and was storing data in AT that wasn’t supported by the core AT project and had no place to go in ArchivesSpace. For its time, it made sense — we needed better information stored about container types and integration with the ILS.

We contracted with Hudson Molonglo to build this plug-in so that the data would have someplace to go. Ever since it’s been built, we’ve been on a tireless crusade to get ArchivesSpace to bring this work into the core code so that we’ll never be on the hook for a project like this again.

So, there are three (really four) data elements in the extended instance table that needed a place to go (container restriction boolean, holdings/bib id, and container type). We found places for this data in the form of the more muscular conditions governing access notes, top containers, and container profiles, but we still needed a mechanism to bring that data across. The geniuses at HM took care of that for us. This script took about five hours to run in my environment. I don’t think anyone else out there did what we did with AT, but if you did, that’s how to fix it.

Doing the ASpace-specific bits of the Container Profile update script

This is the bit where dimensions get added. It’s worth saying again that this was my first time doing this kind of work — there were plenty of opportunities to make mistakes. We restored from back-up a few times.

Plug-in specific updates

Our Yale Accessions plug-in auto-creates accession numbers by taking the accession date and using the corresponding fiscal year as the first part of the identifier, then letting the user choose a department code, and then auto-incrementing. In order for the department code picker to work, we had to add department codes.
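The numbering logic can be sketched like this. This is not the plug-in's code. The July 1 start of the fiscal year, the naming of the fiscal year after the calendar year in which it ends, the "FY-DEPT-NNNN" format and the department codes are all assumptions made for the example.

```python
from datetime import date

# Assumption for this sketch: the fiscal year starts on July 1 and is
# named for the calendar year in which it ends.
FISCAL_YEAR_START_MONTH = 7


def fiscal_year(accession_date: date) -> int:
    """Return the fiscal year that contains the accession date."""
    if accession_date.month >= FISCAL_YEAR_START_MONTH:
        return accession_date.year + 1
    return accession_date.year


def next_accession_number(accession_date, department_code, existing_numbers):
    """Build 'FY-DEPT-NNNN' using the next unused sequence number.

    existing_numbers holds the identifiers already assigned. The
    three-part format is invented for illustration.
    """
    prefix = f"{fiscal_year(accession_date)}-{department_code}-"
    used = [int(n[len(prefix):]) for n in existing_numbers if n.startswith(prefix)]
    sequence = max(used, default=0) + 1
    return f"{prefix}{sequence:04d}"
```

The sequence restarts for each combination of fiscal year and department code, so the department codes have to exist before any number can be generated.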

Adding enumeration values

There were a couple of enumeration lists that needed values added to them so that data could migrate over from AT and end up in the right places. This was mostly related to our payments plug-in and material types plug-in.

The payments module uses an ISO list that tracks currency codes. Unfortunately, we had some non-ISO-compliant values (French Francs and German Deutschmarks, for instance). This enumeration list is non-editable in the application, but James Bullen (because he is rad) wrote a Ruby script that would let us make this list editable and then add values. This is way quicker than going through the application, and I’ve been using this script a lot lately. It lives here. Here’s what adding these currency codes might look like:

ruby enumangler.rb -s currency_iso_4217 -e

ruby enumangler.rb -s currency_iso_4217 -v FRF,DEM,XBT

ruby enumangler.rb -s currency_iso_4217 -u

The first line makes the enumeration list editable, the second adds the values and the third makes it uneditable again. Obviously, there are really good reasons to not edit a list that’s supposed to be un-editable, so be careful.

Run the accession table post-migration script for BRBL

Again, you’re better off not putting yourself in this position, but if you DO, know that there are awesome programmers out there like the folks at Hudson Molonglo who can get you out of it. We had originally planned on doing this ourselves with spit and baling wire and some SQL updates and that was definitely not the way to go. Leave heavy lifting in the database to the professionals.

More BRBL clean-up

Many systems ago, blank fields were migrated as “.”. Mark went through and deleted these from unitdates, but since notes are stored as hex strings, he had a really hard time updating those.
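One way to get a handle on this kind of problem is to decode the hex, test the text, and re-encode it. The sketch below assumes that the hex string is simply UTF-8 text written out as hexadecimal. That is an assumption about the storage, not something we confirmed, and if a note is really structured data you would have to parse it after decoding. The function names are invented.

```python
def decode_hex_note(hex_string: str) -> str:
    """Turn a hex-encoded note (assumed to be UTF-8 text) back into text."""
    return bytes.fromhex(hex_string).decode("utf-8")


def encode_hex_note(text: str) -> str:
    """Encode text as an uppercase hex string, the reverse of decoding."""
    return text.encode("utf-8").hex().upper()


def is_placeholder(hex_string: str) -> bool:
    """True when the decoded note is nothing but a lone '.' placeholder."""
    return decode_hex_note(hex_string).strip() == "."
```

Under that assumption, a note holding only the placeholder shows up as "2E", while a real note that merely ends in a full stop is left alone.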

Updating the accession audit trail

I wrote about this in another post — basically, since accessioning is an activity that is closely tied to people and dates, we wanted a way to bring over the date created, date updated, created by and updated by values from Archivists’ Toolkit. It was easier than I thought it would be. This script also became the basis for other cross-database updates we had to do.

Check to make sure everything works

Here was our checklist:

- export ead (transform with Yale EAD BPG style sheet, validate)

- import ead

- bulk container update

- create each type of record

- add user

- back up the database

- test reports

Finally

We made a copy of the database and a back-up of the solr index and sent it off to our sysadmin. I don’t think we immediately went out for a beer, but we should have.

Staffing the migration

This migration was done by archivists (Mark Custer and myself), not IT professionals. Upon reflection, I would encourage anyone in our position who knows that they’re facing a complex process to find (beg, borrow, steal, kidnap, whatever) an IT professional at their organization and make sure that she is just as engaged in the mechanics of the migration as they are. While we’ve been very happy with the level of engagement we’ve gotten from the IT professionals on our committee and from our server admins, I think that we took too much on ourselves. We should have brought IT in sooner and we should have made sure that the organization as a whole allowed for us to have more of their time. Instead of Mark and me sitting for hours at a time in a meeting room, diagramming the steps of the migration, it would have been good to have a sysadmin there, too, who might have known ways that we could have made this easier on ourselves.

In the end, Mark and I did a very fine job (if I do say so myself), but we’re now hitting a point where the folks who have to support this application know a lot less about it than Mark and I do. It might have been better to find other ways of keeping them in the fold earlier in the process.

Staying organized

We had a shared google doc with a running checklist of everything that needed to happen, in order. We also made good use of a white board. Mark and I now work about a mile away from each other, and even though this was an entirely digital process, it was so important that we work together face-to-face. The rooms at the Beinecke’s new technical services space are extremely comfortable and handsome — they did a great job configuring the workspace for the realities of collaboration (and the need for natural light!)

Someday it won't feel like this anymore. pic.twitter.com/BMFGYWTw72

— Maureen Callahan (@meau) May 12, 2015